AI SaaS solutions have moved from experimental to production-ready across most business functions. In 2025, the question is no longer whether to integrate AI into your SaaS stack — it is which tools deliver real ROI and which are feature theatre.

This post covers how AI SaaS platforms work, which benefits are real and which are overhyped, how to evaluate vendors, and how to implement these tools without breaking your existing operations.

If you are building an AI-enhanced SaaS product rather than buying one, see the SaaS product lifecycle guide and the SaaS MVP development framework for how to scope and ship that kind of product.

What "AI-enhanced SaaS" actually means in practice

The term gets applied to everything from a chatbot widget to a full ML inference pipeline. In practice, it means one of a few concrete things:

AI in intake flows: A form that uses an LLM to classify the user's input and route them appropriately, or pre-populate fields based on what they type. Saves support time. Easy to implement via API call.

Automated document processing: Upload a contract or invoice, extract structured data, flag anomalies. This is one of the highest-ROI AI integrations available today — the alternative is manual data entry.

Smart notifications: Instead of rule-based triggers ("send email if no login for 7 days"), use a model to determine when a user is at risk of churning based on behaviour patterns. More precise, fewer false positives.

Content and copy assistance: Generate first drafts of reports, summaries, or messages scoped to your product's context. Users edit a draft instead of writing from scratch.

What it does not mean: an "AI insights" dashboard tab that shows charts nobody opens, or a chatbot that answers generic questions your existing help docs already cover.
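To make the intake-flow case concrete, here is a minimal sketch of classify-then-route. The queue names, the `route_intake` function, and the `fake_classifier` stub are all hypothetical; in a real product `classify` would wrap whichever LLM API you use. The point it illustrates is that the model's label is checked against a known set, and anything unexpected goes to a general queue.

```python
from typing import Callable

# Hypothetical queue names; a real product would define its own.
ROUTES = {
    "billing": "billing-queue",
    "bug": "engineering-queue",
    "sales": "sales-queue",
}
FALLBACK_ROUTE = "general-queue"


def route_intake(text: str, classify: Callable[[str], str]) -> str:
    """Return the queue for a piece of intake text.

    `classify` stands in for an LLM call that returns a label string.
    Labels outside ROUTES are sent to the general queue.
    """
    try:
        label = classify(text).strip().lower()
    except Exception:
        # Model or network failure: do not block the form submission.
        return FALLBACK_ROUTE
    return ROUTES.get(label, FALLBACK_ROUTE)


def fake_classifier(text: str) -> str:
    """Keyword stub used here in place of a real model call."""
    if "invoice" in text.lower():
        return " Billing "  # messy casing and whitespace, as models often return
    return "something unexpected"
```

Calling `route_intake("Where is my invoice?", fake_classifier)` returns the billing queue, while any unrecognized label or raised exception lands in the general queue.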

Where AI adds real value in SaaS

Organized by what actually works in production:

Content and copy generation: Summarizing user-generated content, drafting templated outreach, generating descriptions from structured data. Works well when the output is reviewed before use. Fails when it runs unsupervised on critical paths.

Classification and tagging: Categorizing support tickets, flagging content policy violations, tagging uploaded files by type or topic. High reliability. Measurable accuracy. Easy to evaluate.

Summarization: Condensing long documents, meeting transcripts, or activity logs into actionable summaries. Users adopt this fast because the alternative (reading everything) is clearly worse.

Anomaly detection: Flagging unusual patterns in usage, transactions, or sensor data. Especially valuable in fintech, health tech, and logistics SaaS where edge cases have real consequences.

Search: Semantic search over your product's data is consistently one of the highest-engagement AI features. Users find it immediately useful. The implementation is well-understood — embeddings + vector store + retrieval.
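The embeddings + vector store + retrieval pattern can be sketched in a few lines. This is an illustration under loud assumptions: `toy_embed` is a word-count stand-in for a real embedding model (it is not semantic at all), and `InMemoryVectorStore` is a hypothetical class standing in for a real vector database. Only the shape of the pipeline carries over.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]

# Tiny fixed vocabulary for the stand-in embedding below.
VOCAB = ["invoice", "refund", "password", "login"]


def toy_embed(text: str) -> List[float]:
    """Stand-in for an embedding model: counts vocabulary words."""
    words = text.lower().split()
    return [float(words.count(w)) for w in VOCAB]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Hypothetical store: keeps (doc_id, vector) rows in a list."""

    def __init__(self, embed: Callable[[str], Vector]):
        self._embed = embed
        self._rows: List[Tuple[str, Vector]] = []

    def add(self, doc_id: str, text: str) -> None:
        self._rows.append((doc_id, self._embed(text)))

    def search(self, query: str, k: int = 3) -> List[Tuple[str, float]]:
        """Return the k most similar documents as (doc_id, score)."""
        q = self._embed(query)
        scored = [(doc_id, cosine(q, vec)) for doc_id, vec in self._rows]
        scored.sort(key=lambda row: row[1], reverse=True)
        return scored[:k]
```

Swapping `toy_embed` for a real embedding call and the list for a managed index changes the quality and scale, not the structure: embed on write, embed the query, rank by similarity.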

Where AI is oversold

Chatbots that don't know your product: A chatbot backed by a general-purpose LLM without retrieval-augmented generation on your actual documentation is a support liability, not an asset. It will hallucinate answers and route users less reliably than a static FAQ would.

"AI insights" dashboards nobody reads: Adding an LLM-generated summary to a dashboard nobody opens does not make the dashboard useful. The problem is usually that the underlying data is not actionable — AI does not fix that.

LLM calls for simple conditional logic: If the decision tree is a few if-else branches, do not wrap it in an LLM call. It adds latency, cost, and non-determinism to a problem that does not require any of those.

Recommendation engines for small catalogues: If your product has 50 items, a simple sort by popularity will usually match or beat an ML recommendation system, and it requires zero infrastructure.
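For the last two cases, the non-AI version is short enough to show in full. This is a sketch with made-up names: the 7-day threshold echoes the rule-based trigger mentioned earlier, while the trial-plan branch, the `Item` type, and the `purchases` field are assumptions added for illustration.

```python
from dataclasses import dataclass
from typing import List


def needs_reengagement_email(days_since_login: int, plan: str) -> bool:
    """A few branches of plain logic: deterministic, free, instant."""
    if plan == "trial":
        # Hypothetical shorter window for trial accounts.
        return days_since_login >= 3
    return days_since_login >= 7


@dataclass(frozen=True)
class Item:
    name: str
    purchases: int


def top_items(catalogue: List[Item], n: int = 5) -> List[Item]:
    """Popularity sort: the whole 'recommender' for a small catalogue."""
    return sorted(catalogue, key=lambda item: item.purchases, reverse=True)[:n]
```

Both functions are trivially testable and behave identically on every call, which is exactly what an LLM call would take away.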

Architecture patterns for AI-enhanced SaaS

Three practical choices when adding AI to a product:

External API call (OpenAI, Anthropic, Gemini): The right default. Low upfront investment, fast iteration, pay-per-use pricing. The tradeoff is data leaving your infrastructure and latency from network round-trips. Acceptable for most use cases. Not acceptable for regulated data (healthcare, finance) without explicit data processing agreements.

Purpose-built tools: Services like AWS Textract for document extraction, Google Document AI for structured forms, or Azure AI services (formerly Azure Cognitive Services) for specific tasks. Often more accurate than general-purpose LLMs within their specific domains. Worth evaluating when you have a defined, narrow task.

Fine-tuning or local model: The right choice when general models perform poorly on your domain-specific language, when you need predictable inference costs at scale, or when data residency requirements prohibit external APIs. High upfront cost. Only justified after you have proven the use case with an external API first.
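Moving between these three options is much cheaper if product code never talks to a vendor SDK directly. Below is one possible shape for that seam, not a prescribed design: `TextModel`, `ExternalApiModel`, `LocalModel`, and `summarize_note` are hypothetical names, and the injected callables stand in for the real HTTP client or local inference call, which are omitted.

```python
from typing import Callable, Protocol


class TextModel(Protocol):
    """The only surface product code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class ExternalApiModel:
    """Placeholder for a hosted LLM; `send` would perform the API request."""

    def __init__(self, send: Callable[[str], str]):
        self._send = send

    def complete(self, prompt: str) -> str:
        return self._send(prompt)


class LocalModel:
    """Placeholder for a fine-tuned or self-hosted model."""

    def __init__(self, generate: Callable[[str], str]):
        self._generate = generate

    def complete(self, prompt: str) -> str:
        return self._generate(prompt)


def summarize_note(model: TextModel, note: str) -> str:
    """Product feature written against the interface, not a vendor."""
    return model.complete(f"Summarize in one sentence:\n{note}")
```

With this in place, starting on an external API and later switching to a local model is a change at the point where the model object is constructed, while `summarize_note` stays untouched.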

The build vs buy decision

Use an external LLM API when: you are still discovering what users actually want from AI features, the feature is not a core differentiator, or you need to ship in weeks not months.

Use a purpose-built AI service when: the task is well-defined (document OCR, speech-to-text, translation), you need SLA guarantees, or the general-purpose LLM accuracy is insufficient for the task.

Build your own pipeline when: you have millions of inference calls per month that make API costs untenable, your training data is proprietary and gives you a real accuracy edge, or your compliance requirements prohibit third-party data processing.

Most early-stage SaaS products should stay in the first category until they hit a specific reason to move.

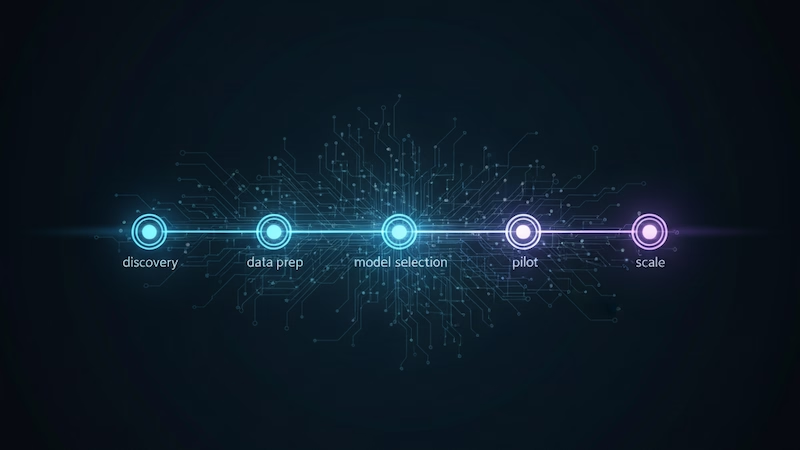

How AI affects SaaS MVP scope and timeline

Adding AI to an MVP almost always increases scope. The extent depends on what role AI plays.

If AI is a supporting feature (e.g., auto-tagging uploads, summarizing notes), expect 2–4 weeks of additional scope for integration, error handling, and fallback behaviour when the model returns unexpected output.

If AI is a core feature (e.g., the product is an AI writing assistant, an AI scheduling tool), the scope and timeline double at minimum. The core product loop depends on model reliability, which requires significantly more testing than deterministic logic.

Key scoping questions: Does the AI feature have a graceful fallback if the API is down? What happens when the model returns incorrect output — does the user see it, or is there a human review step? How do you measure whether the AI feature is actually working?
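The first of those questions can be answered in code early. Here is a minimal sketch of a fallback wrapper, assuming a hypothetical `SummaryService` and a `call_model` callable that stands in for the real API client. It serves the last good result when the call fails and returns `None` when there is nothing to show, so the UI can hide the feature instead of erroring.

```python
from typing import Callable, Dict, Optional


class SummaryService:
    """Wraps a model call with a last-good-result cache."""

    def __init__(self, call_model: Callable[[str], str]):
        self._call_model = call_model
        self._cache: Dict[str, str] = {}

    def summarize(self, doc_id: str, text: str) -> Optional[str]:
        """Return a summary, a cached summary, or None if neither exists."""
        try:
            summary = self._call_model(text)
        except Exception:
            # API down, timed out, or rate limited: fall back to the cache.
            return self._cache.get(doc_id)
        if not summary or not summary.strip():
            # Empty output is treated the same way as a failed call.
            return self._cache.get(doc_id)
        self._cache[doc_id] = summary
        return summary
```

A production version would likely add a timeout, logging, and cache expiry; the sketch only shows the degrade-cleanly path.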

For practical frameworks on scoping AI SaaS products, see the SaaS MVP guide and MVP timeline guide.

Evaluation checklist before adding AI

Before committing to an AI feature in your SaaS product, work through these questions:

Is the core product working without AI? AI should enhance a product that already has users and validated use cases. Adding AI to fix low engagement is rarely the right lever.

Can AI fail gracefully? Every AI integration needs a fallback. If the LLM is unavailable, the feature should degrade cleanly by showing cached results, hiding itself, or routing to manual handling. It should not break the product.

Do users understand why AI made a decision? In sensitive contexts (financial decisions, medical triage, hiring), users and regulators need to understand why the system produced a result. Black-box LLM outputs create compliance exposure. Design the explainability before you build.

Can you measure accuracy? "The AI feels right" is not an evaluation metric. Before shipping, define what good output looks like and how you will track when the model degrades. Model drift is real — outputs that worked well at launch can degrade as user behaviour or the underlying model changes.

Is the data pipeline production-ready? AI features in demos use clean, hand-crafted inputs. In production, users submit malformed data, edge cases, and adversarial inputs. Build the data cleaning and validation layer before the AI layer.
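The accuracy question in this checklist does not need heavy tooling to get started. A rough sketch, assuming you have a small hand-labelled set of inputs and a `predict` callable wrapping the AI feature (both hypothetical here, as is the 0.02 tolerance):

```python
from typing import Callable, Iterable, Tuple


def accuracy(
    predict: Callable[[str], str],
    labelled: Iterable[Tuple[str, str]],
) -> float:
    """Fraction of (input, expected_label) pairs the feature gets right."""
    pairs = list(labelled)
    if not pairs:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for text, expected in pairs if predict(text) == expected)
    return correct / len(pairs)


def has_regressed(current: float, baseline: float, tolerance: float = 0.02) -> bool:
    """True when accuracy drops more than `tolerance` below the launch baseline."""
    return current < baseline - tolerance
```

Running the same labelled set on a schedule, and after every model or prompt change, turns "the AI feels right" into a number you can alert on.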

Cost structure for AI-enhanced SaaS

The main cost drivers in AI-enhanced SaaS products:

Inference costs: Per-token or per-request charges for LLM API calls. These scale directly with usage. GPT-4o runs roughly $2.50/1M input tokens and $10/1M output tokens (as of early 2025). For high-volume features, this adds up quickly — model selection matters.

Embedding storage: If you're running semantic search, you're storing and querying vector embeddings. Pinecone, pgvector, and Supabase vectors all carry storage and query costs that grow with the size of your corpus.

Latency overhead: AI API calls add 500ms–3s of latency depending on model and payload size. This affects UX design: users get frustrated when a synchronous AI feature blocks the page, so running the call asynchronously and notifying them when it finishes is usually better.

Evaluation and monitoring: LLM output quality needs ongoing monitoring. Platforms like LangSmith, Helicone, or custom logging add operational cost but are necessary for catching regressions.
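Inference cost is the easiest of these to estimate up front. The sketch below uses the GPT-4o prices quoted above as defaults; the function name and the workload figures in the usage note are hypothetical, so plug in your own traffic and your provider's current pricing.

```python
# Prices quoted in this post for GPT-4o (as of early 2025), in USD.
INPUT_USD_PER_MILLION = 2.50
OUTPUT_USD_PER_MILLION = 10.00


def monthly_inference_cost(
    requests_per_month: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price: float = INPUT_USD_PER_MILLION,
    output_price: float = OUTPUT_USD_PER_MILLION,
) -> float:
    """Estimated monthly spend in USD for one AI feature."""
    input_millions = requests_per_month * input_tokens_per_request / 1_000_000
    output_millions = requests_per_month * output_tokens_per_request / 1_000_000
    return input_millions * input_price + output_millions * output_price
```

For an assumed feature handling 1,000,000 requests a month at 800 input and 200 output tokens each, this works out to $2,000 for input plus $2,000 for output, or $4,000 a month. Note that the 200 output tokens cost as much as the 800 input tokens, which is why capping output length is often the first optimization.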

Future direction

The 2025 AI SaaS landscape is moving toward smaller, faster, cheaper models that run closer to the data — in the browser, at the edge, or on-device. This matters for SaaS builders because it changes the cost and latency calculus on features that previously required server-side LLM calls.

Multi-modal models (handling text, images, audio, documents in one call) are reducing the number of specialized tools needed for document processing workflows.

Agentic patterns — where models take sequences of actions rather than responding to single prompts — are moving out of demos and into production for specific workflows: customer support escalation, automated code review, structured data extraction pipelines.

The builders who will use these effectively are not the ones chasing every new model release. They are the ones who have clear product problems, measurable success criteria, and infrastructure that can swap models without rewriting the product.

Building something that needs AI? Start a chat — I work with technical founders on scoping AI features into SaaS products and can give you a straight read on what's worth building and what's not.